A01 Novel architecture and learning rules inspired by cortical microcircuits

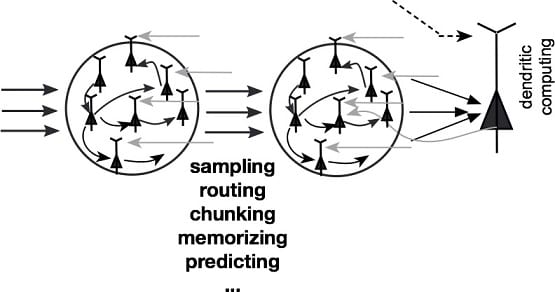

The brain has many biological characteristics whose computational roles and functional implications for AI are poorly understood. For instance, is spike coding advantageous over rate coding in neural computation and learning? Are spikes useful for improving AI technologies? Do the dendrites of neurons play an active role in computation and learning at the network level, or do they merely add biological complexity that is unimportant for network-level functions? In this project, we explore answers to these questions. We will investigate novel learning rules for structural plasticity of excitatory and inhibitory synapses, explore the roles of dendrites in processing externally and internally driven information and memory, formulate novel learning rules for deep-layer networks, model the dynamical circuit mechanisms of information routing between brain regions, and extend reservoir computing to a broader range of cognitive problems. The brain has machinery for modeling the external world, such as hierarchical Bayesian computation by cortical circuits and compressed sequence processing by the hippocampus and cortico-basal ganglia loops. Our goal is to understand the network mechanisms that underlie efficient and flexible computation in the brain and to open up novel applications of these mechanisms.