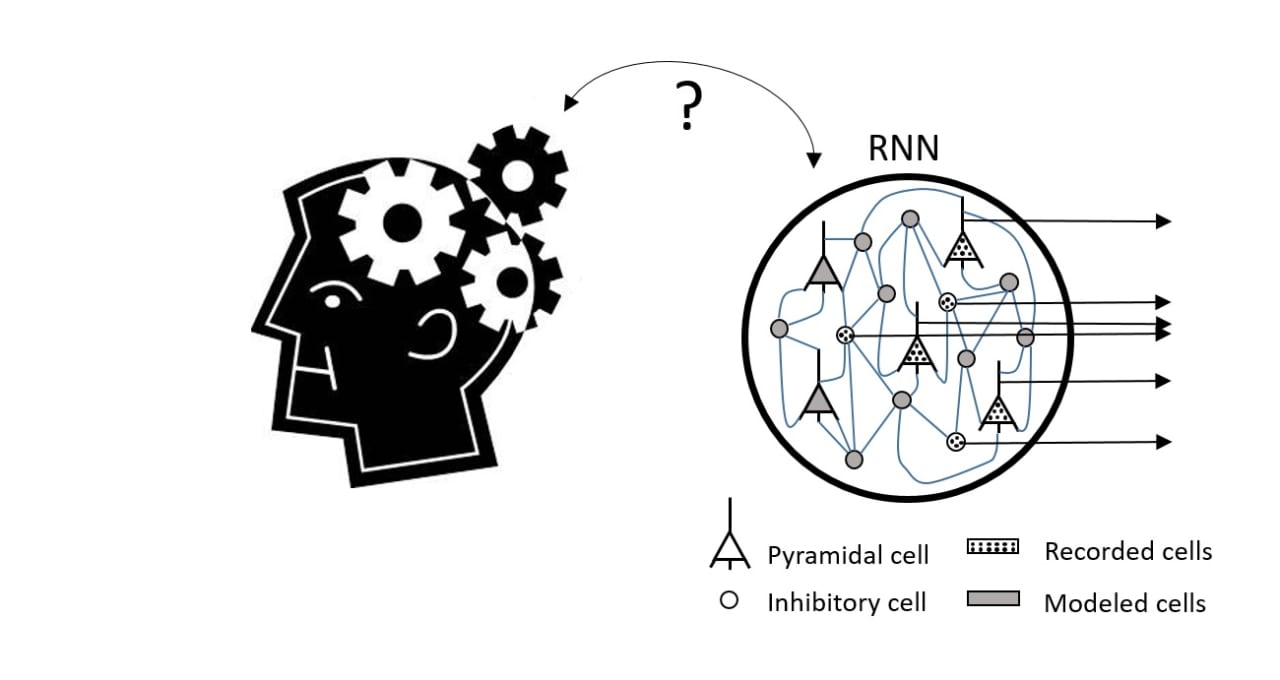

A01 Using Recurrent Neural Networks to Study Neural Computations in Cortical Networks

The field of neuroscience has recently rekindled its interest in using artificial neural networks to model neural responses. This trend has been boosted by important advances in hardware technology, the refinement of machine-learning algorithms, and the availability of large datasets. At the same time, technological innovations now allow simultaneous recordings from many neurons, which has likewise led to the creation of large neural databases. Powerful computational tools are now needed to model such neural big data and to formulate a unifying theoretical framework capable of revealing the underlying computations. To this end, recurrent neural networks (RNNs) are becoming increasingly popular. Their structural and dynamical properties are directly inspired by the recurrent architecture of cortical circuits, thus potentially providing an ideal platform to model large-scale recordings. However, beyond impressive data fitting, it remains unclear which classes of dynamical problems RNNs are best suited for that cannot be addressed using simpler theoretical frameworks, and it is therefore uncertain whether RNNs can advance our knowledge of the fundamental principles of neural computation. In our research, we will examine how RNNs can be used to answer key questions on sensory processing and decision-making that traditional approaches have not been able to elucidate. To this end, we are focusing on the study of response variability, across time and neurons, and on the large spontaneous variability observed in “unstimulated” cortical networks. These forms of variability have traditionally been considered a source of noise to be removed via spatial and/or temporal averaging. Instead, we hypothesize that they reflect the evolution of the network along dynamical dimensions involved in fundamental aspects of the computations. To validate our hypothesis, we are combining powerful experimental technologies (two-photon imaging, optogenetics) with modeling methods based on RNNs.
A distinguishing element of our work is the use of tools to perturb neural dynamics at single-cell spatial resolution. This technology allows us to perform causal experimental validations of model predictions, a major step forward in elucidating neural principles compared to standard correlative methods. Overall, our research aims to demonstrate that RNNs are mature and interpretable computational tools for developing much-needed unifying theories of neural computation.